A Real Account of Deep Fakes

Laws regulating pornographic deepfakes are written to prohibit “digital forgeries,” “false” images, or media “indistinguishable” from “authentic” recordings. Yet the typical anti-deepfake law covers materials that aren’t forgeries, aren’t false, and that reasonable observers can easily distinguish from authentic recordings. Though drafted as if they regulate statements of fact, anti-deepfake laws actually target certain outrageous depictions per se—and rightly so, because pornographic deepfakes cause harm irrespective of their truth or falsity. However, the inapposite language of facts results in statutes with crucial ambiguities. Moreover, because anti-deepfake laws ban outrageous depictions irrespective of the factual assertions they make, they differ fundamentally from the information-privacy and defamation regimes that they superficially resemble. Instead of regulating true or false disclosures of fact, anti-deepfake laws fall into a distinct and disfavored category: laws that forbid expression because it is outrageous.

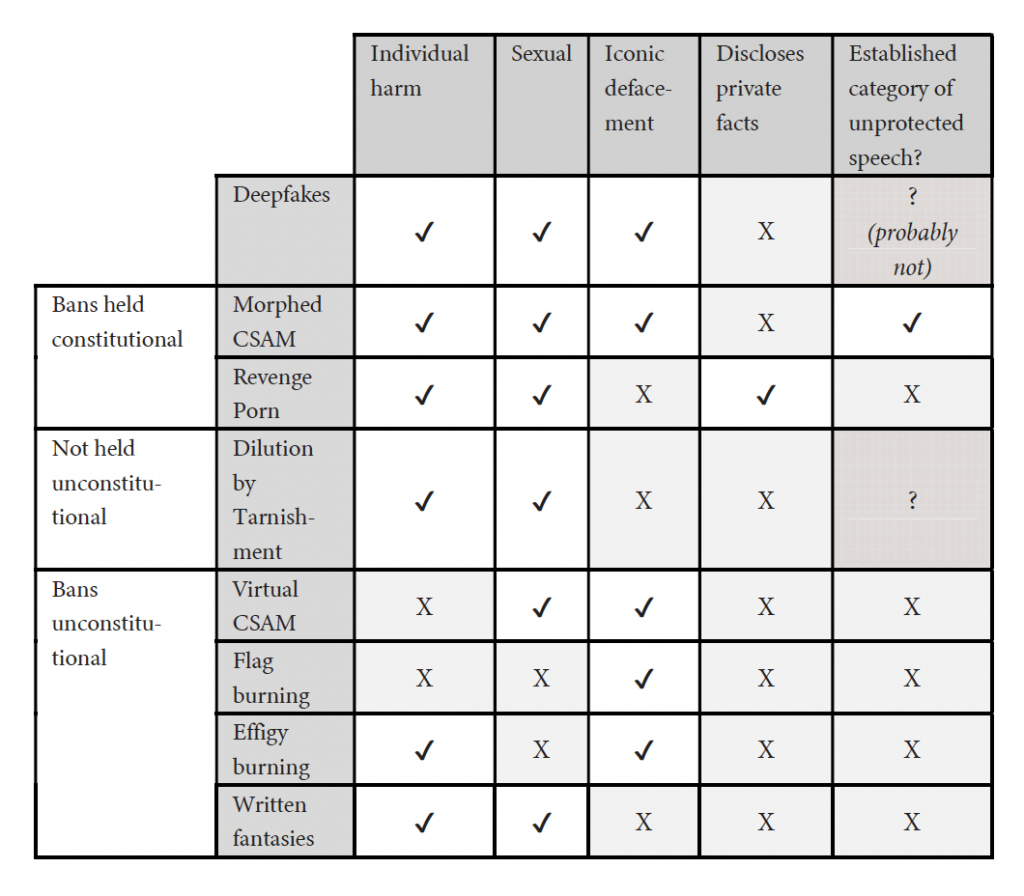

This Article begins by excavating the internal tension of anti-deepfake statutes and explaining the laws’ theoretical underpinnings. It shows that the laws mean to redress highly offensive appropriations of likeness, but employ the incommensurable vocabulary of a different dignitary harm: the circulation of facts about persons. The Article then uses semiotic theory to explain how deepfakes differ from the media they mimic and why those differences matter legally. Photographs and video recordings record events. Deepfakes merely depict them. Justifications for regulating records do not necessarily justify regulating depictions. Many laws—covering everything from trademark dilution to flag burning to “morphed” child sexual abuse material (CSAM)—have banned outrageous depictions as such. Several remain in effect today. Yet when such bans are challenged, courts mischaracterize imagery to sidestep constitutional scrutiny: Courts pretend fictional depictions are factual records. Anti-deepfake laws resist this dodge. Courts considering these laws will be forced to confront head-on the extent to which a statute may ban outrageous expression as such.

Introduction

“[R]epresentation is reality . . . .”1 Catharine A. MacKinnon, Only Words 29 (1993) (discussing, with approval, an argument attributed to Susanne Kappeler).

Breakthrough technology has made it cheap and easy to synthesize photorealistic images and videos of recognizable individuals. People are using it to generate massive amounts of porn.2 Henry Ajder, Giorgio Patrini, Francesco Cavalli & Laurence Cullen, The State of Deepfakes: Landscape, Threats, and Impact 1 (2019), https://regmedia.co.uk/2019/10/08/deepfake_report.pdf [perma.cc/84G4-LMSA]. See generally Laura Wagner & Eva Cetinic, Perpetuating Misogyny with Generative AI: How Model Personalization Normalizes Gendered Harm, arXiv (May 20, 2025), https://doi.org/10.48550/arXiv.2505.04600. In keeping with colloquial usage, I use “porn,” “pornography,” and “pornographic” to denote depictions of nudity or sexual conduct, but it bears noting that jurists have observed that this vocabulary arguably mischaracterizes nonconsensual, sexualized depictions. Mary Anne Franks, “Revenge Porn” Reform: A View from the Front Lines, 69 Fla. L. Rev. 1251, 1257–58 (2017), cited in People v. Austin, 155 N.E.3d 439, 451 (Ill. 2019).

An AI user needs only a few photographs of his target to generate a pornographic “deepfake” of anyone from an ex-girlfriend to a celebrity.3 Hany Farid, Mitigating the Harms of Manipulated Media: Confronting Deepfakes and Digital Deception, PNAS Nexus, July 2025, at 1, 4. To match what appears to be the typical scenario, I use “he/him” pronouns to refer in general to a creator or distributor of nonconsensual, deepfake pornography, and “she/her” pronouns to describe the person depicted. Ajder et al., supra note 2, at 2; Jess Weatherbed, Trolls Have Flooded X with Graphic Taylor Swift AI Fakes, The Verge (Jan. 25, 2024), https://theverge.com/2024/1/25/24050334/x-twitter-taylor-swift-ai-fake-images-trending [perma.cc/N9KT-QAXX].

In early 2024, sexually explicit deepfakes of Taylor Swift gathered tens of millions of views on the social media site X.4 Weatherbed, supra note 3.

Recent reporting has documented the wide availability and popularity of AI models calibrated to create pornographic images of specific people.5Emanuel Maiberg, Hugging Face Is Hosting 5,000 Nonconsensual AI Models of Real People, 404 Media (July 15, 2025), https://www.404media.co/hugging-face-is-hosting-5-000-nonconsensual-ai-models-of-real-people/ [perma.cc/RP5Z-VU5L]; Emanuel Maiberg, A16z-Backed AI Site Civitai Is Mostly Porn, Despite Claiming Otherwise, 404 Media (July 15, 2025), https://www.404media.co/a16z-backed-ai-site-civitai-is-mostly-porn-despite-claiming-otherwise/ [perma.cc/6Y98-E6J2]. See generally Wagner & Cetinic, supra note 2.

Women in politics and journalism are being threatened with deepfake pornography that uses their likenesses.6Mark Scott, Deepfake Porn Is Political Violence, Politico (Feb. 8, 2024), https://politico.eu/newsletter/digital-bridge/deepfake-porn-is-political-violence [perma.cc/963K-BELR]; Danielle Keats Citron, Sexual Privacy, 128 Yale L.J. 1870, 1922–23 (2019).

Schoolchildren across the nation are using AI to synthesize naked images of their classmates; two boys in their early teens have been charged with felonies for allegedly doing so.7Caroline Haskins, Florida Middle Schoolers Arrested for Allegedly Creating Deepfake Nudes of Classmates, Wired (Mar. 8, 2024), https://www.wired.com/story/florida-teens-arrested-deepfake-nudes-classmates/ [perma.cc/WJ3F-JC5X]; see also Jason Koebler & Emanuel Maiberg, A High School Deepfake Nightmare, 404 Media (Feb. 15, 2024), https://www.404media.co/email/547fa08a-a486-4590-8bf5-1a038bc1c5a1/ [perma.cc/3QQU-ED9H].

Alarm over pornographic deepfakes has united every state attorney general,8Meg Kinnard, Prosecutors in All 50 States Urge Congress to Strengthen Tools to Fight AI Child Sexual Abuse Images, AP News (Sep. 5, 2023), https://apnews.com/article/ai-child-pornography-attorneys-general-bc7f9384d469b061d603d6ba9748f38a [perma.cc/6XLY-DBBG].

the Biden White House,9Justin Sink, White House Urges Action After ‘Alarming’ Taylor Swift Deepfakes, Bloomberg (Jan. 26, 2024), https://bloomberg.com/news/articles/2024-01-26/white-house-urges-action-after-alarming-taylor-swift-deepfakes [perma.cc/RTU3-F8UP].

and the Trump White House.10Rebecca Shabad, Trump Signs Bill Cracking Down on Explicit Deepfakes, NBC News (May 19, 2025), https://nbcnews.com/politics/white-house/trump-sign-bill-cracking-deepfake-pornography-rcna207693 [perma.cc/GLD4-QFG7].

In May 2025, President Trump signed into law the TAKE IT DOWN Act, a criminal statute that specifically targets pornographic deepfakes of adults.11Tools to Address Known Exploitation by Immobilizing Technological Deepfakes on Websites and Networks Act (TAKE IT DOWN Act), Pub. L. No. 119-12, 139 Stat. 55 (2025) (to be codified at 47 U.S.C. § 223(h)).

Forty-one states have passed similar civil or criminal legislation.12These numbers are accurate as of July 24, 2025, according to Public Citizen’s tracker. See Tracker: State Legislation on Intimate Deepfakes, Public Citizen (July 22, 2025), https://web.archive.org/web/20250725153026/https://citizen.org/article/tracker-intimate-deepfakes-state-legislation [perma.cc/YV8Q-9NF7]. For a systematic analysis of proposed and enacted deepfake-related legislation, see Thomas E. Kadri & Sonja R. West, Deepfake Torts: Emerging Tort Frameworks in U.S. Deepfake Regulation, 18 J. Tort L. 515 (2025).

Anti-deepfake laws are sweeping the nation. This Article focuses on two glaring details that these statutes seldom, if ever, duly acknowledge: Deepfakes are not photographs or video recordings; and often, they don’t even pretend that they are. Anti-deepfake statutes differ from information-privacy and defamation laws in a crucial respect: Information-privacy and defamation law regulate facts, or assertions of fact, about persons. Anti-deepfake laws ban outrageous depictions of persons, irrespective of any factual assertions they make. This difference matters for two reasons. First, legislators who approach deepfakes as a defamation, fraud, or forgery problem or as an information-privacy problem—that is, as a problem of false representations of fact or true disclosures of private facts—end up enacting statutes that may not redress the harms they should redress.

Second, because anti-deepfake statutes ban certain outrageous imagery per se, irrespective of any factual assertions the imagery makes, the rationales that justify defamation and information-privacy regimes do not justify these laws. Courts applying the First Amendment regard bans on offensive expressions of opinion with a distinct skepticism not directed at regulations of factual statements.13See, e.g., Matal v. Tam, 582 U.S. 218, 223 (2017) (stating “a bedrock First Amendment principle: Speech may not be banned on the ground that it expresses ideas that offend.”); Snyder v. Phelps, 562 U.S. 443, 458 (2011) (“[I]n public debate we must tolerate insulting, and even outrageous, speech in order to provide adequate breathing space to the freedoms protected by the First Amendment.” (cleaned up) (quoting Boos v. Barry, 485 U.S. 312, 322 (1988))).

This isn’t to say that courts never uphold the constitutionality of per se bans on outrageous expression. They do. Along with obscenity law, the most notable example is the federal ban on morphed child sexual abuse material (CSAM), a lower-tech predecessor to deepfakes in which nonsexual images of identifiable children are edited to depict sexual conduct.14See infra Section III.A.2.c.

But in upholding such bans, courts do not always admit that they forbid outrageous expression per se. Rather, courts sometimes pretend that they forbid expression because it signifies particular historical facts. In other words, when evaluating outrageous imagery, courts pretend to be regulating records of historical fact when they are in fact regulating mere depictions of fictional events. In the language of semiotics, courts pretend to be analyzing indexical images when they are in fact analyzing iconic images.15See infra Section II.A.

Properly drafted and properly understood, anti-deepfake statutes are content-based restrictions on noncommercial speech that is not necessarily obscene; is not necessarily defamatory, nor fraudulent, nor even false; and discloses no private facts. They are the most recent manifestation of a longstanding impulse to ban outrageous visual representations.16See infra Section III.B.2.

This impulse motivates not only historical regulations of flag and effigy burning, but also bans on morphed CSAM and trademark dilution by tarnishment that remain in force today. Anti-deepfake laws are also the most recent manifestation of the law’s impulse to mischaracterize per se bans on outrageous expression as something they are not. Courts relied on the conflation of records and depictions to uphold bans on morphed CSAM, and scholars and legislators are reenacting this maneuver today for anti-deepfake laws.

But anti-deepfake laws offer none of the analytical offramps that other bans on outrageous expression offer. Courts considering morphed CSAM could expediently classify it as “child pornography,” which is categorically unprotected by the First Amendment, even though the Supreme Court’s rationale for that categorical exclusion justifies regulating only records of abuse and not mere depictions of abuse.17See infra Section III.A.2.

This approach is unlikely to work as well for deepfakes, because there is no categorical First Amendment exclusion for pornographic depictions of adults. Nor can deepfakes be forbidden on the ground that they disclose true, private facts, as revenge pornography does, or on the ground that they necessarily make false statements of fact.

Although they use the language of facts, anti-deepfake laws actually regulate fictional expression. These laws will force courts to consider the degree to which American law can ban outrageous iconography as such—something it has reliably done and continues to do today—even when jurists have to admit that this is what the law is doing. The internal tensions of anti-deepfake laws invite us to reconsider how hostile our constitutional order ought to be to the regulation of outrageous expression per se—and, indeed, how hostile it ever really has been.

Part I examines anti-deepfake laws to distill their essential characteristics. Although commentators frequently analogize anti-deepfake laws to defamation and revenge-porn bans, Part I identifies a critical distinction: Anti-deepfake laws regulate not statements of fact, but outrageous expression per se. In privacy-theory terms, the laws redress “appropriation,” a privacy harm that does not require the disclosure of true facts or the assertion of falsehoods. However, the statutes attempt to do so using infelicitous concepts of truth and falsity, which leads to unclear coverage.

Part II uses semiotic theory to explain how deepfakes differ from photographs and video recordings. Photographs are indexical: They record a visual phenomenon as it appeared through a particular lens at a particular moment in time. Deepfakes are iconic: They represent by resemblance. We interpret indexical media as assertions of fact; as a result, accurate photographs can reveal private matters and deceptive photographs can defame. But deepfakes are merely icons. They do not necessarily assert or record facts in the way that photographs do. As a result, the legal rationales historically invoked to regulate indexical imagery cannot support the full breadth of today’s anti-deepfake laws.

Part III tours trademark dilution and CSAM law, past prohibitions on flag desecration and effigy burning, and the criminalization (vel non) of sexual fantasy to show that anti-deepfake laws address a well-understood harm and have close cousins in past and present American legal doctrines. Part III also, however, shows that courts mischaracterize the semiotic status of this harm in order to sidestep First Amendment scrutiny: To uphold the regulation of icons as constitutional, courts invoke rationales that instead justify the regulation of indices.

Finally, Part IV explains that properly understanding the semiotics of deepfakes is essential to appropriate and effective regulation. Congress and the states have rushed to enact laws that address a deluge of photorealistic, AI-generated pornography. Our impulse to outlaw outrageous depictions per se will collide with our tendency to deny that we are doing so. We may try to equate deepfakes with photographs, but commandeering the law of photographs and video recordings to regulate AI-generated imagery will produce bizarre outcomes—like classifying any sexually explicit image generated by popular AI models as “child pornography” under federal law, even if the generated imagery depicts only adults.18See infra Section IV.A.

Properly regulating deepfakes requires acknowledging that they are icons, not indices, and employing the legal theories that regulate them as such: obscenity law and an extended version of the tort of appropriation.

I. The Internal Tension of Anti-Deepfake Laws

Legal discussion of deepfakes frequently links their harms to two familiar bodies of law. The first is the regulation of false and deceptive statements, like defamation and fraud.19The TAKE IT DOWN Act refers to deepfakes as “digital forger[ies],” as does the Cyber Civil Rights Initiative. Pub. L. No. 119-12, 139 Stat. 55, 55 (2025) (to be codified at 47 U.S.C. § 223(h)(1)(B)); Laws, Cyber Civil Rights Initiative, https://cybercivilrights.org/existing-laws [perma.cc/H56Q-AV9C]. See generally Abigail George, Note, Defamation in the Time of Deepfakes, 45 Colum. J. Gender & L. 122 (2024); Michael P. Goodyear, Dignity and Deepfakes, 57 Ariz. St. L.J. 931 (2025).

The second is the law of information privacy, particularly prohibitions on disseminating so-called “revenge porn”—nonconsensual, intimate photographs and video recordings.20Franks and Waldman, for example, make both comparisons: they assert both that deepfakes are “closely related to what is often colloquially referred to as ‘revenge porn’ ” and that deepfakes are “a form of deliberately deceptive speech.” Mary Anne Franks & Ari Ezra Waldman, Sex, Lies, and Videotape: Deep Fakes and Free Speech Delusions, 78 Md. L. Rev. 892, 893–94 (2019); see also Rebecca A. Delfino, Pornographic Deepfakes: The Case for Federal Criminalization of Revenge Porn’s Next Tragic Act, 88 Fordham L. Rev. 887, 897–98 (2019).

But studying anti-deepfake statutes reveals that they differ essentially from these supposedly close relatives. Actionable defamation and revenge porn necessarily communicate actual or purported facts about a victim. Legal liability for deepfakes, by contrast, seldom requires that the deepfake assert any facts whatsoever about a victim.

This Part examines the civil and criminal statutes that, as of July 24, 2025, forty-one states and the federal government have enacted specifically to regulate nonconsensual, pornographic deepfakes depicting adults.21 See supra note 12.

It finds that, although anti-deepfake laws commonly limit their coverage to “false” media, “forger[ies],” and/or apparently “authentic” media, the laws, in practice, cover media irrespective of truth or falsity. In other words, the statutes aren’t drafted properly. Instead of conditioning liability on a statement’s truth or falsity, as their language sometimes implies, anti-deepfake laws actually target a statement’s offensiveness.22I use the words “offensiveness” and “outrageousness” to encompass the harm that a statement may cause irrespective of any precise, constative content we might assign to it. Cf. Judith Butler, Excitable Speech: A Politics of the Performative 57, 76–77 (1997).

What makes anti-deepfake laws infelicitous is that they mean to regulate media because of how it looks: They target highly offensive aesthetics. However, instead of defining the laws’ scope expressly in terms of offensiveness, legislatures define covered subject matter in terms of the facts it appears to communicate. They seek to remedy a harm rooted in offense using the language of harms rooted in true or false assertions. This is an unbridgeable gap.

This Part begins by identifying the aspects of anti-deepfake laws that demonstrate that their focus is not factual propositions, but outrageous aesthetics. It then highlights a number of statutory-interpretation problems in enacted laws that result from legislatures using the language of factual propositions to regulate the incommensurable harm of offense. It then identifies anti-deepfake laws’ proper theoretical grounding—the privacy harm known as appropriation of likeness—and distinguishes it from the defamation and information-privacy regulations that might seem to be anti-deepfake statutes’ closest cousins.

A. Anti-Deepfake Laws Regulate Fiction Using the Vocabulary of Facts

The core conduct that anti-deepfake statutes proscribe is the dissemination23Some statutes may also cover mere creation or possession of a deepfake, but the laws’ focus is on dissemination. See Kadri & West, supra note 12, at 12.

of a “realistic,”24 See infra Section I.A.2.

sexually explicit25See, e.g., Idaho Code § 18-6606 (2024); Minn. Stat. § 604.32.2(2) (2024); N.Y. Civ. Rights Law § 52-c(2)(a) (McKinney 2024); Tex. Penal Code Ann. § 21.165(b) (West 2025).

depiction of an “identifiable”26 See, e.g., Ala. Code § 13A-6-240(b) (2024); Haw. Rev. Stat. § 711-1110.9(1)(c) (2021); 740 Ill. Comp. Stat. 190/10(a) (2024); Minn. Stat. § 604.32.2(2)–(3) (2024); N.Y. Penal Law § 245.15(1)(a) (McKinney 2023).

person without that person’s consent.27 See, e.g., Minn. Stat. § 604.32.2(1) (2024); N.Y. Penal Law § 245.15.1(b) (McKinney 2023); Ala. Code § 13A-6-240(a)(2) (2024). Although other laws have been enacted to regulate deepfakes—such as by establishing a generalized “property” right in digital likenesses, see, e.g., Tenn. Code Ann. § 47-25-1101, -1103(a) (2024), or banning certain types of AI-generated electioneering media, see, e.g., Minn. Stat. § 609.771 (2024)—I use the term “anti-deepfake laws” to refer specifically to laws that single out sexual deepfakes for special treatment. Similarly, when I use the word “deepfake” without further context, I am referring to a pornographic deepfake.

Anti-deepfake laws come in both civil and criminal variants. Available civil remedies include disgorgement, actual damages, statutory damages of up to $150,000 per deepfake, punitive damages, and attorney’s fees.28See, e.g., Cal. Civ. Code § 1708.86(e) (West 2025).

Criminal penalties include multiyear prison terms.29See, e.g., TAKE IT DOWN Act, Pub. L. No. 119-12, § 2(a)(2), 139 Stat. 55, 55–58 (2025) (to be codified at 47 U.S.C. § 223(h)(4)(A)).

Civil anti-deepfake laws may not require proof that a violator intended to harm the depicted individual; instead, they may require actual or constructive knowledge that the depicted person did not consent to the creation or disclosure of a deepfake.30See, e.g., Cal. Civ. Code § 1708.86(b) (West 2025); 740 Ill. Comp. Stat. 190/10(a) (2024); Minn. Stat. § 604.32.2(a)(1) (2024); N.Y. Civ. Rights Law § 52-c(2)(a) (McKinney 2024).

Many criminal laws, meanwhile, require proof of some additional intent or knowledge. Several laws require intent to cause harm to the victim,31Ga. Code Ann. § 16-11-90(a)(1), (b) (2022); Haw. Rev. Stat. § 711-1110.9 (2024); N.Y. Penal Law § 245.15 (McKinney 2023); Va. Code Ann. § 18.2-386.2(A) (2024).

while others impose liability when “intent to self-gratify” is present.32S.D. Codified Laws § 22-21-4(3)(d) (2024); see also, e.g., Wyo. Stat. Ann. § 6-4-306(b)(iii)(B) (2024); N.D. Cent. Code § 12.1-27.1-03.3(6) (2025).

Another group of criminal laws imposes lower requirements, such as proof that the offender knew or should have known that the media was a deepfake.33Fla. Stat. § 836.13(3) (2025) (“know[] or reasonably should have known that [the] visual depiction was an altered sexual depiction”); La. Stat. Ann. § 14:73.13(B)(1) (2025) (“knowledge that the material is a deepfake that depicts another person”). For slightly different requirements, see Utah Code Ann. § 76-5b-205(2)(a) (West 2025) (“know[] or should reasonably know would cause a reasonable person to suffer emotional or physical distress or harm”) and Ala. Code § 13A-6-240(a) (2024) (“knowing[]” distribution).

Most important for present purposes, however, are two provisions that delineate the types of communications that anti-deepfake laws regulate: First, the laws usually apply irrespective of whether a deepfake would deceive a reasonable observer into believing it to be a record of historical fact. Second, the laws often limit their coverage to depictions rendered in “realistic” styles, or depictions “indistinguishable from” “authentic” media.

These two provisions exist in some tension. The first establishes that the factual assertions in a deepfake are beside the point: The typical law regulates media irrespective of whether any reasonable observer would understand it as factual. Yet even as the laws treat deceptiveness as irrelevant, they reassert the importance of verisimilitude through provisions that limit coverage to depictions that resemble authentic photo and videographic records of historical fact. In other words, a covered deepfake must resemble an authentic record of historical fact, yet it is irrelevant whether it could actually pass for such a record.

Why would a typical anti-deepfake law simultaneously provide that (1) a deepfake’s tendency to deceive is immaterial and (2) only “realistic” deepfakes are covered? And in practical terms, what media does this language actually cover? On its own, each of these provisions makes sense. Recent outrage over deepfakes shows that certain realistic, sexualized depictions can cause especially grave harm to their subjects, and that this harm can occur whether or not the deepfakes are understood as documentary fact.34See infra notes 103–104 and accompanying text.

In tandem, however, the two provisions illustrate anti-deepfake laws’ equivocal conception of the harm they seek to redress: Are deepfakes harmful because they are false, or for another reason? This equivocation, in turn, illuminates a deeper fault line in the United States’ regulation of privacy and dignitary harms.

1. “Falsity” Without a Deceptiveness Requirement

Some anti-deepfake laws purport to limit their coverage to “false” media.35 Ark. Code Ann. § 5-14-139(a)(1)(B) (2025); Del. Code Ann. tit. 10, § 7802 (2024); Ga. Code Ann. § 16-11-90(b)(1) (2022); N.H. Rev. Stat. Ann. § 644:9-a (2025); S.C. Code Ann. § 16-15-330 (2025). Even the legislative history of the revised TAKE IT DOWN Act recites an emphasis on “fals[ity].” See H.R. Rep. No. 119-82, at 2 (2025).

But closer reading shows that language of falsity is generally a red herring: Hardly any anti-deepfake laws seem to require that a deepfake could instill a false belief in a reasonable observer. In fact, many laws specify that disclaimers of falsity offer no defense. Laws in California, Florida, Illinois, New York, South Carolina, Tennessee, and Washington expressly provide for liability even where the deepfake contains a disclaimer stating it is unauthorized or that it does not depict the person’s actual behavior.36Cal. Civ. Code § 1708.86(d) (West 2025); Fla. Stat. § 836.13(6) (2025); 740 Ill. Comp. Stat. 190/10(c) (2024); N.Y. Civ. Rights Law § 52-c(2)(b) (McKinney 2024); S.C. Code Ann. § 16-15-330(1) (2025); Tenn. Code Ann. § 39-17-1906(c) (2024); Wash. Rev. Code § 7.110.025(3) (2025).

Under nearly all anti-deepfake laws, then, a pornographic deepfake’s actual or perceived correspondence with historical fact is irrelevant.37Almost always—but not always. Not every regulation of pornographic deepfakes treats disclaimers as irrelevant. Louisiana’s law, for example, specifically excludes from its scope “any material . . . that includes content, context, or a clear disclosure visible throughout the duration of the recording that would cause a reasonable person to understand that the audio or visual media is not a record of a real event.” La. Stat. Ann. § 14:73.13(C)(1) (2025); see also N.J. Stat. Ann. § 2C:21-17.8(g)(1) (West 2025) (exempting “any content that a reasonable viewer or listener would not believe to authentically depict speech or conduct”). By contrast, laws regulating deepfakes in elections typically extinguish liability when a disclaimer is present. See, e.g., Cal. Elec. Code § 20010(b) (West 2025); N.Y. Elec. Law § 14.106(5)(b) (McKinney 2025) (containing an amendment by a 2024 session law to require in “any political communication that was produced by or includes materially deceptive media” a disclosure stating, “This (image, video, or audio) has been manipulated”); Wash. Rev. Code § 42.62.020(4) (2025). At least one election-related anti-deepfake law has already been preliminarily enjoined as unconstitutional. Kohls v. Bonta, 752 F. Supp. 3d 1187 (E.D. Cal. 2024).

A deepfake can be unlawful even if no reasonable observer would understand it as a record of something the victim actually did: For example, imagine a deepfake that depicts fantastical events and bears a conspicuous, effective, and unquestionably true disclaimer, “FICTION: NOT AN AUTHENTIC RECORDING.” A deepfake can also be unlawful even if it faithfully depicts true events: Imagine a jilted partner who, by memory, uses AI to generate painstakingly accurate reenactments of sexual encounters with his ex. Neither of these hypothetical deepfakes is “false.”38Goodyear argues, “Where deepfakes purport to depict actual events, they are false.” Goodyear, supra note 19, at 998. However, like any other representational medium, deepfakes can truthfully portray actual events. Cf., e.g., Commonwealth v. Serge, 837 A.2d 1255, 1257 (Pa. Super. Ct. 2003) (reciting, as true, facts depicted in computer-generated animation of the prosecution’s theory of the case leading to conviction, which had been admitted as demonstrative evidence), aff’d, 896 A.2d 1170 (Pa. 2006); Serge, 896 A.2d at 1179–80 (describing process of authenticating “accurate” computer-generated demonstrative evidence). Deepfakes are necessarily false insofar as they purport not to be deepfakes—but many deepfakes do not represent themselves as anything other than deepfakes.

There is an argument that both are true, though it may be most accurate simply to say that they’re neither true nor false.39See John C.P. Goldberg & Benjamin C. Zipursky, A Tort for the Digital Age: False Light Invasion of Privacy Reconsidered, 73 DePaul L. Rev. 461, 482 (2024) (discussing “non-newsworthy statements that would be found highly offensive by a reasonable person and would be nonactionable as opinion in a defamation context because they are not provably true or provably false”).

Yet typical anti-deepfake laws treat them no differently from deceptively false deepfakes. This refusal to differentiate seems entirely appropriate; these nonfalse deepfakes can still cause grave harm. But it belies the language of forgery and falsity that appears in these laws.

2. Subject Matter and “Realism” Requirements

At the same time as they discount whether a reasonable observer would regard a deepfake as documentary record, many anti-deepfake statutes define covered deepfakes in terms of their resemblance to documentary records. For instance, anti-deepfake statutes generally focus on visual media, and many limit their coverage to videos and still images.40Cal. Civ. Code § 1708.86(a)(3)(A) (West 2025); N.Y. Civ. Rights Law § 52-c(3)(a) (McKinney 2024); N.Y. Penal Law § 245.15(1)(a) (McKinney 2023); Tex. Penal Code Ann. § 21.165 (West 2025); Va. Code Ann. § 18.2-386.2 (2024).

The broadest state laws cover both pictorial and aural representations.41 See, e.g., La. Stat. Ann. § 14:73.13 (2025); Minn. Stat. § 604.32(1)(b) (2024).

No anti-deepfake law expressly covers written or spoken words, even though words alone can falsely portray an identifiable person engaging in sexual activity.42Cf., e.g., James v. Gannett Co., 353 N.E.2d 834, 837 (N.Y. 1976) (“It is old law that written charges imputing unchaste conduct to a woman are libelous per se . . . .”). Amy Adler notes that disfavor for images is a durable feature of obscenity and pornography law. See generally Amy Adler, The First Amendment and the Second Commandment, in Law, Culture and Visual Studies 161 (Anne Wagner & Richard K. Sherwin eds., 2014).

The statutes’ core coverage is photorealistic images and videos—although the language they use to articulate this coverage is often clumsy and confusing.

Given that the typical statute treats truthfulness or falsity as irrelevant, the most bewildering aspects of anti-deepfake laws are their requirements concerning how covered media must look. Anti-deepfake laws often expressly limit coverage to material that is “realistic,”43See, e.g., Cal. Civ. Code § 1708.86(a)(6) (West 2025); Fla. Stat. § 836.13(1)(a) (2025); Minn. Stat. § 604.32.1(b)(1) (2024); N.Y. Civ. Rights Law § 52-c(1)(b) (McKinney 2024); N.Y. Penal Law § 245.15(2)(d) (McKinney 2023); S.D. Codified Laws § 22-21-4(3)(a) (2024).

but they seldom define “realism.”44 One exception is Nevada’s law, which refers specifically to “photorealis[m].” Nev. Rev. Stat. § 200.770 (2025).

Moreover, the scope of the typical law frustrates one obvious interpretation: It forecloses equating realism with observers’ tendency to perceive media as factual. “Realistic” must mean something different from “deceptive,” because anti-deepfake laws typically require no proof of deception and often specify that a violation can occur even when the media contains a disclaimer that it is false.45Cal. Civ. Code § 1708.86(d) (West 2025); Fla. Stat. § 836.13(6) (2025); N.Y. Civ. Rights Law § 52-c(2)(b) (McKinney 2024).

What anti-deepfake laws are targeting is not the assertion of particular truths or falsehoods, but the use of particular aesthetics. “Realism” is a style, and what constitutes realism varies depending on cultural and historical context.46Rebecca Tushnet, Worth a Thousand Words: The Images of Copyright, 125 Harv. L. Rev. 683, 724 (2012); Benjamin L.W. Sobel, Elements of Style: Copyright, Similarity, and Generative AI, 38 Harv. J.L. & Tech. 49, 84–86 (2024).

Some statutes, like those in Louisiana, Massachusetts, Pennsylvania, and South Dakota, require a sort of realism that would make the media appear “authentic” to a reasonable observer.47La. Stat. Ann. § 14:73.13(C)(1) (2025); Mass. Gen. Laws ch. 265, § 43A(b)(1) (2024); 18 Pa. Cons. Stat. § 3131 (2025); S.D. Codified Laws § 22-21-4(3)(a) (2024). So did the proposed DEFIANCE Act. Disrupt Explicit Forged Images and Non-Consensual Edits Act of 2024, S. 3696, 118th Cong. § (3)(a)(3)(A) (as amended by S. Amend. 3049 and passed by Senate, July 23, 2024) [hereinafter DEFIANCE Act of 2024].

Louisiana’s law refers specifically to resemblance to “authentic” media that “record . . . the actual speech or conduct of the individual” depicted.48La. Stat. Ann. § 14:73.13(C)(1) (2025).

Here, “authentic” denotes documentary media, like photographs and video recordings. Unlike other forms of pictorial representation, which merely depict events, documentary media actually record real-life events.49Compare Depict, Oxford Eng. Dictionary, https://doi.org/10.1093/OED/2187623999 (“to portray, delineate, figure anyhow”), with Record, Oxford Eng. Dictionary, https://doi.org/10.1093/OED/1987418679 (“[t]o convert (sounds, images, a broadcast, etc.) into permanent form . . . chiefly using magnetic tape or digital electronic techniques”).

As I use these words, to depict an event is to represent it pictorially, whereas to record an event is to capture contemporaneous evidence of its occurrence.50Part II, infra, explains this distinction more precisely using semiotic terminology.

A record may be, but is not necessarily, a depiction, and vice versa.

In the sense that anti-deepfake statutes use the word, an “authentic” photograph is an image that is actually a photograph.51Although one might also ask whether an oil painting is “authentic,” this question connotes whether it was painted by a particular artist, not whether it is actually an oil painting.

Statutes that use the word “authentic” almost certainly require photorealism, “the quality in art . . . of depicting or seeming to depict real people . . . with the exactness of a photograph.”52Photorealism, Merriam-Webster, https://merriam-webster.com/dictionary/photorealism [perma.cc/4ZAZ-55PZ]. Nevada’s law makes this explicit. Nev. Rev. Stat. § 200.770 (2025).

A photorealistic image is one rendered in a style of realism that resembles an “authentic” photograph or video recording. Statutes that limit their coverage to “recording[s] of an individual”53Ala. Code § 13A-6-240(b) (2024).

or media “substantially derivative” of video recordings and photographs54Minn. Stat. § 604.32.1(b) (2024). Minnesota’s statute also includes “electronic image[s],” but because this term is enumerated in a list of otherwise documentary media, it probably does not encompass nondocumentary images. See Yates v. United States, 574 U.S. 528, 543 (2015) (describing “the principle of noscitur a sociis”).

are probably also meant to capture photorealistic media.

Other anti-deepfake laws, however, use far broader language. Florida’s law, for example, covers “any visual depiction that . . . depicts a realistic version of an identifiable person” nude or engaged in sexual conduct.55Fla. Stat. § 836.13(1)(a) (2025).

Indiana’s criminal statute covers a “digital image . . . or video . . . that is of a quality, characteristic, or condition such that it appears to depict the alleged victim,” and several other states employ similar language.56Ind. Code § 35-45-4-8(c)(3) (2025); see also, e.g., Utah Code Ann. § 76-5b-205(1)(a)(ii) (West 2025); Me. Rev. Stat. Ann. tit. 17-A, § 511-A (2025) (“appears to show”); Mont. Code Ann. § 45-5-640(5)(b) (West 2025).

These laws are probably intended to regulate photorealistic, AI-generated media rather than pictorial depictions in general.57For instance, the “sponsor’s statement of intent” accompanying a Texas bill specifically mentions “artificial intelligence.” Tex. Senate, Bill Analysis, S. 88, 1st Sess., at 1 (2023), https://capitol.texas.gov/tlodocs/88R/analysis/html/SB01361F.htm [perma.cc/T5VW-XFRT]. Similarly, a legislative report on Florida’s statute frames the bill as a response to “technology advancing at a rapid rate.” Fla. Senate, Bill Analysis and Fiscal Impact Statement, 2022 Reg. Sess., at 2 (2022).

Yet the text might encompass depictions of all sorts.58For example, read literally, a Texas anti-deepfake statute, as it stood from 2023 to 2025, encompassed videos of fictionalized theatre or puppetry performances, as these media can “depict a real person . . . performing an action that did not occur . . . .” Tex. Penal Code Ann. § 21.165(a)(1) (West 2025). Both the musical Hamilton and the marionette movie Team America: World Police depict real people—Alexander Hamilton and Kim Jong-Il, respectively—performing musical numbers that, to the best of my knowledge, they never performed.

3. “Falsity” and “Realism” Puzzles in the TAKE IT DOWN Act

Prodding at the language and the logic of anti-deepfake statutes reveals that they have been drafted in a manner that clashes with their goals. The statutes’ language suggests that their focus is on whether a deepfake can be distinguished from authentic media. Yet their practical scope includes all deepfakes of a particular aesthetic, irrespective of whether a reasonable observer could distinguish them from authentic media. The statutes require apparent “authenticity” while simultaneously discounting whether any reasonable viewer would take a deepfake to be authentic.

As an illustration, consider the federal TAKE IT DOWN Act, introduced by Senator Ted Cruz in June 2024 and enacted in significantly modified form in May 2025.59Tools to Address Known Exploitation by Immobilizing Technological Deepfakes on Websites and Networks Act, S. 4569, 118th Cong. (2024) [hereinafter TAKE IT DOWN Act First Draft]; Pub. L. No. 119-12, 139 Stat. 55 (2025).

The first draft’s vague language exemplifies problems that appear in enacted state legislation. And although the enacted TAKE IT DOWN Act improves upon the first draft, the new federal law still contains a crucial ambiguity that illustrates its underdeveloped conception of harm: Are deepfakes regulated because of the facts they assert, or because of the way they look?

a. The First Bill’s Illustrative Shortcomings

The first draft of the TAKE IT DOWN Act provided that to be covered, a deepfake must “falsely depict an individual’s appearance or conduct.”60TAKE IT DOWN Act First Draft § 2(a)(2).

It further specified, “an individual appears in an intimate visual depiction if . . . the individual is actually the individual identified in the intimate visual depiction; or . . . a deepfake of the individual is used to realistically depict the individual such that a reasonable person would believe the individual is actually depicted in the intimate visual depiction.”61Id. (numbering omitted).

Inspecting these provisions reveals that they make no sense. Start with “falsely.” Is a “false[] depict[ion]” simply any ahistorical depiction? Or is it only a depiction that can reasonably be interpreted as asserting historical facts about a person? Many expressive works defy a true/false binary. Are Monet’s haystack paintings true or false? The question is nonsensical: As Rebecca Tushnet observes, “[visual] styles are neither true nor false.”62Tushnet, supra note 46, at 724.

Star Wars is not a documentary, but it is fiction rather than falsehood. Falsity denotes being “[c]ontrary to what is true, erroneous,” or outright “mendacious.”63False, Oxford Eng. Dictionary, https://doi.org/10.1093/OED/8506717465 (emphasis added).

If we don’t interpret media to be reporting historical facts, then it isn’t false—even if it isn’t true, either.64See Marc A. Franklin, Fiction, Libel, and the First Amendment, 51 Brook. L. Rev. 269, 273 (1985) (“[V]irtually the entire range of poetic and prose fiction would occupy this middle ground between truth and falsity. Language that does not purport to be reportorial is not automatically to be deprecated as ‘false.’ ”); cf. Richard A. Posner, Law and Literature 515 (3d ed. 2009) (“[O]ne of the adjustments we make in reading a work as literature rather than as history or sociology is generally to ignore issues of factuality . . . .”).

Limiting coverage to false depictions would just establish a takedown regime for certain types of defamation: It could cover images of libelous oil paintings—which are indeed “false[] depict[ions]”—but not photorealistic deepfakes whose content and/or context clearly communicate that they are fictional.

What about the proposed provision that an “individual appears in an intimate visual depiction . . . if the individual is actually the individual identified in” it?65TAKE IT DOWN Act First Draft § 2(a)(2) (emphasis added).

Read literally, this text is almost meaningless. Being “actually the individual identified in [an] intimate visual depiction” was probably meant to cover situations like revenge porn, in which the victim “is actually the individual” recorded in the media, and which the initial bill largely equated with deepfakes. But being recorded is distinct from being identified. As a result, it’s unclear what the “actually” in “actually . . . identified” means.66What’s more, one is “actually . . . identified” even when one is misidentified; the bill did not specify that the identification had to be correct.

Whether a person is identified in surveillance footage of a bank robbery or in an oil painting of a bank robbery, that person is, in both cases, “actually” the person identified in the material in question. But only the surveillance footage directly evidences the individual’s participation in the robbery, because it is a record rather than a depiction.

Equally confusing was the bill’s provision that an individual “appears in an intimate visual depiction” if she “is . . . realistically depict[ed] . . . such that a reasonable person would believe [she] is actually depicted.”67TAKE IT DOWN Act First Draft § 2(a)(2).

On the narrowest plausible reading, the definition requires that a reasonable person actually would believe that the deepfake is an authentic photograph or video recording of the person it depicts. Reading the bill in this way makes the deepfake’s content and context especially significant: If the deepfake contains a disclaimer that it is a deepfake, or if the deepfake depicts fantastical conduct that could never take place in real life, then it may not be reasonable for an observer to believe that the deepfake depicts an individual’s speech or conduct as that speech or conduct truly occurred.

A broader reading of the first draft would be that it required a deepfake to be photorealistic but not necessarily misleading. So construed, the law would have covered even deepfakes that are so obviously fake that no reasonable observer could mistake them for authentic photographs or videos, as long as they are rendered in a photorealistic style. This is the best reading of the numerous statutes that require realism while simultaneously providing that disclaimers of falsity are no defense to liability. Unhelpfully, however, neither the first draft nor the enacted TAKE IT DOWN Act expressly addresses whether a disclaimer of falsity affects liability.

Finally, the broadest plausible reading of this language is that it required only that the depicted individual be identifiable from the deepfake, regardless of its realism. The first draft required only “that a reasonable person would believe the individual is actually depicted,” not that a reasonable observer would believe that the individual is actually recorded. Both the surveillance footage of the bank robbery and the oil painting of the bank robbery depict the robber. But only the footage records the robber.68Other statutes acknowledge this difference: Louisiana’s, for example, defines deepfakes as “falsely appear[ing] to a reasonable observer to be an authentic record of the actual speech or conduct of the individual . . . .” La. Stat. Ann. § 14:73.13(C)(1) (2025) (emphasis added).

This means that the broadest reading of the first draft would require only that a reasonable observer would recognize that a specific individual is depicted in the deepfake, thus covering cartoons, flipbooks, or any other nonphotorealistic pictorial medium capable of presentation in a still or video image.

The TAKE IT DOWN Act was significantly revised before its passage, but its original shortcomings remain illustrative, as similar errors appear in enacted state legislation. Numerous state statutes, for example, contain the problematic “appears to depict” language.69Del. Code Ann. tit. 10, § 7802 (2024); Ind. Code § 35-45-4-8(c)(3) (2025); Mont. Code Ann. § 45-5-640(5)(b) (West 2025); Utah Code Ann. § 76-5b-205(1)(a)(ii) (West 2025); see also Ark. Code Ann. § 5-14-139(b)(2) (2025) (“an ordinary person . . . would conclude that the depiction is of the identifiable person”); N.C. Gen. Stat. § 14-190.5A(a)(2) (2025) (“a reasonable person would believe the image depicts an identifiable individual”); Wyo. Stat. Ann. § 6-4-306 (2024) (“purports to represent an identifiable person”).

Others define covered deepfakes as “false” despite omitting any requirement that they be understandable as assertions of fact.70See supra note 35.

Finally, many state laws attempt to treat deepfakes and revenge porn identically, despite fundamental ontological differences.71See, e.g., Cal. Civ. Code § 1708.86 (West 2025); Colo. Rev. Stat. § 18-7-107 (2025); Ga. Code Ann. § 16-11-90 (2022); Haw. Rev. Stat. § 711-1110.9 (2024); N.C. Gen. Stat. § 14-190.5A (2025); Neb. Rev. Stat. § 25-3502(2) (2025); Va. Code Ann. § 18.2-386.2 (2024); Vt. Stat. Ann. tit. 13, § 2606 (2025). As Kadri and West note, treating deepfakes and revenge porn alike leads to infelicities. See Kadri & West, supra note 12, at 17 (“[T]raditional revenge porn statutes often draw lines between private and nonprivate situations by including exceptions for images taken ‘in public’ or without ‘reasonable expectation of privacy.’ This concept obviously translates poorly to deepfakes, where the depicted conduct never occurred and no ‘private’ moments exist.”).

b. The Unresolved Problem of “Indistinguishability”

The enacted TAKE IT DOWN Act improves upon the first draft. The modified law removes the incoherent language about “actual[] depict[ion]” and all mentions of the ambiguous word “false.” Instead, the enacted statute defines a covered “digital forgery” as “any intimate visual depiction of an identifiable individual . . . that, when viewed as a whole by a reasonable person, is indistinguishable from an authentic visual depiction of the individual.”72Pub. L. No. 119-12, § 2(a)(2), 139 Stat. 55, 55 (2025) (to be codified at 47 U.S.C. § 223(h)(1)(B)).

The statute also addresses offenses involving “digital forgeries” in a separate section from offenses involving documentary revenge porn, while the first draft grouped them together.73Compare id., with TAKE IT DOWN Act First Draft § 2(a)(2).

However, the enacted law still contains a crucial ambiguity: Does its definition of “digital forgery” refer to a deepfake’s aesthetic appearance or its propositional content? There are two different ways that a deepfake might be “indistinguishable from an authentic visual depiction”: It might be aesthetically indistinguishable or propositionally indistinguishable. If a deepfake need only be aesthetically indistinguishable, then it need only be photorealistic, even if its content or context makes it obvious that it is not authentic.

Alternatively, the statute could require a deepfake to be propositionally indistinguishable from an authentic photograph or video recording, covering only deepfakes capable of convincing a reasonable observer that they are authentic records, or that they otherwise state documentary facts.74The TAKE IT DOWN Act is not the first regulation of sexual imagery to employ unclear language of “indistinguishability.” The Child Pornography Prevention Act of 1996 (CPPA) defined “child pornography” to include images that “appear[] to be[] of a minor engaging in sexually explicit conduct,” a phrase that the legislative history described as covering media “virtually indistinguishable to unsuspecting viewers from unretouched photographs of actual children engaging in identical sexual conduct.” Pub. L. No. 104-208, § 121(2)(4), 110 Stat. 3009, 3009–28 (1996); United States v. Hilton, 167 F.3d 61, 72 (1st Cir. 1999) (quoting S. Rep. No. 104-358, pt. I, at 7). This left unclear whether the standard was based on aesthetic realism or on what a viewer believed the image recorded. The Supreme Court struck down the CPPA’s “appears to be” language, and Congress amended the law to cover images “indistinguishable from” an actual minor, defining this as what “an ordinary person viewing the depiction would conclude” depicts an actual minor, but excluding cartoons, drawings, and the like. See infra note 205; 18 U.S.C. § 2256(8)(B), (11); Pub. L. No. 108-21, § 502(c), 117 Stat. 650, 679 (2003). This, too, does not definitively resolve whether the test is aesthetic or propositional. In connection with a different statutory provision, the Supreme Court has stated that “simulated” sexual intercourse requires “caus[ing] a reasonable viewer to believe” actual minors engaged in sexual conduct, as opposed to fictional scenes. United States v. Williams, 553 U.S. 285, 296–97 (2008); see also 18 U.S.C. § 2252A(a)(3)(B)(ii); 18 U.S.C. § 2256(2).

The statutory text seems to point to the latter, as it specifies that a “digital forgery” is a depiction that “when viewed as a whole by a reasonable person, is indistinguishable from an authentic visual depiction.”75Pub. L. No. 119-12, § 2(a)(2), 139 Stat. 55, 55 (2025) (to be codified at 47 U.S.C. § 223(h)(1)(B)) (emphasis added).

When a reasonable person views a communication “as a whole,” that person presumably pays attention not only to its aesthetic appearance, but also to the content it depicts and the context in which it is presented.76At least, this is how courts in defamation cases analyze whether a communication “as a whole” is capable of defamatory meaning. Farah v. Esquire Mag., 736 F.3d 528, 535 (D.C. Cir. 2013) (quoting Afro-Am. Publ’g Co. v. Jaffe, 366 F.2d 649, 655 (D.C. Cir. 1966) (en banc)). To analyze statements as a whole, courts look to the context of the publication, which “includes not only the immediate context of the disputed statements, but also the type of publication, the genre of writing, and the publication’s history of similar works.” Id.

In many instances, attention to content and context will put a reasonable person on notice that a photorealistic deepfake is an AI-generated depiction rather than an authentic documentary record.

Yet a propositional-indistinguishability requirement clashes with the TAKE IT DOWN Act’s evident purpose. In addition to its criminal prohibitions on publishing deepfakes, the law requires covered online platforms to establish a notice-and-takedown regime for intimate visual depictions.77Pub. L. No. 119-12, § 3, 139 Stat. 55 (2025) (to be codified at 47 U.S.C. § 223a).

The law expressly states that covered platforms include online services that regularly “publish, curate, host, or make available content of nonconsensual intimate visual depictions.”78Id. at § 4 (to be codified at 47 U.S.C. § 223a). The legislation already appears to have discouraged such sites from operating: Shortly after the bill passed both chambers of Congress, a “[m]ajor deepfake porn site” called MrDeepFakes shut down. Alana Wise, Major Deepfake Porn Site Shuts Down, NPR (May 6, 2025), https://npr.org/2025/05/06/nx-s1-5388422/mr-deepfakes-porn-site-ai-shut-down [perma.cc/Y5FC-AGZ2]. Some scholars have noted uncertainty about whether the statute’s takedown regime covers deepfakes. See James Grimmelmann, Deconstructing the Take It Down Act, 68 Commc’ns of the ACM 28, 29–30 (2025); Renée DiResta, Mary Anne Franks, Becca Branum, Adam Conner & Jen Patja, Lawfare Daily: Digital Forgeries, Real Felonies: Inside the TAKE IT DOWN Act, Lawfare at 22:58–23:23 (May 6, 2025), https://lawfaremedia.org/article/lawfare-daily–digital-forgeries–real-felonies–inside-the-take-it-down-act [perma.cc/ZWA9-CD63]. While the statute could be clearer, there remains a strong argument that its takedown regime encompasses deepfakes: The law defines a “digital forgery” as a specific type of “intimate visual depiction,” Pub. L. No. 119-12, § 2(a)(2), 139 Stat. 55, 55 (2025) (to be codified at 47 U.S.C. § 223(h)(1)(B)), and it defines “intimate visual depiction” by cross-reference to the term’s definition in a 2022 statute, codified at 15 U.S.C. § 6851, that provides a private right of action for nonconsensual disclosures of intimate visual depictions, id. (to be codified at 47 U.S.C. § 223(h)(1)(E)). 
The National Association of Attorneys General recently noted that § 6851’s “application to digital forgeries is currently unsettled.” David Leibert, Congress’s Attempt to Criminalize Nonconsensual Intimate Imagery: The Benefits and Potential Shortcomings of the TAKE IT DOWN Act, Nat’l Ass’n of Att’ys Gen. (Aug. 26, 2025), https://www.naag.org/attorney-general-journal/congresss-attempt-to-criminalize-nonconsensual-intimate-imagery-the-benefits-and-potential-shortcomings-of-the-take-it-down-act/ [perma.cc/JJS9-DKBT]. For what it is worth, § 6851 defines “visual depiction” with a cross-reference to a third statute, which defines the term without reference to authenticity, see 18 U.S.C. § 2256(5), and that same statute expressly contemplates that a “visual depiction” may not be an authentic photograph and may instead be a computer-generated image that does not record historical fact, see 18 U.S.C. § 2256(8)(C); infra Section III.A.2.c (discussing § 2256(8)(C)).

It is intuitively obvious why the TAKE IT DOWN Act—a statute designed to limit the proliferation of nonconsensual deepfakes—would specifically target websites whose raison d’être is promulgating nonconsensual deepfakes. But this intuition depends to some degree on reading the law to target aesthetic indistinguishability rather than propositional indistinguishability. Of the places a deepfake might appear, a website that primarily or exclusively hosts deepfakes is among the least likely to sow confusion about factual propositions. A reasonable person viewing a deepfake “as a whole” on a site overtly dedicated to deepfakes will very probably distinguish it “from an authentic visual depiction of the individual.”79TAKE IT DOWN Act, Pub. L. No. 119-12, § 2(a)(2), 139 Stat. 55, 55 (2025) (to be codified at 47 U.S.C. § 223(h)(1)(B)). Again, the significance of a publication’s context is something defamation doctrine recognizes. See, e.g., Farah, 736 F.3d at 537 (noting that the “primary intended audience” of an allegedly defamatory communication on a blog “would have been familiar with [defendant]’s history of publishing satirical stories”); see also Quentin J. Ullrich, Note, Is This Video Real? The Principal Mischief of Deepfakes and How the Lanham Act Can Address It, 55 Colum. J.L. & Soc. Probs. 1, 8 (2021) (acknowledging that “[v]iewers of videos on sites like MrDeepFakes almost certainly know they are fake”); supra note 76.

Thus, the law’s specific enumeration of nonconsensual-intimate-image sites suggests an intent to target aesthetics rather than factual assertions.

Other anti-deepfake laws more clearly communicate an aesthetic indistinguishability requirement.80Still other laws, however, evince even greater ontological confusion. Maryland’s law refers to “an image . . . that is indistinguishable from the person.” Md. Code Ann., Crim. Law § 3-809(a)(6)(i)(2) (West 2025). Representations of people are not people, and almost always, they are exceedingly easy to distinguish from actual, embodied people. Moreover, a three-dimensional dummy truly indistinguishable from a person would be excluded by that same law’s carveout for “sculpture.” Id. § 3-809(a)(6)(iii)(3). For the same drafting gaffe, see Tex. Civ. Prac. & Rem. Code Ann. § 98B.001 (West 2025).

Take Texas’s 2025 revisions to its criminal statute, which replace a definition of a deepfake as “a video, created with the intent to deceive, that appears to depict a real person performing an action that did not occur in reality,” with the definition, “ ‘Deep fake media’ means a visual depiction . . . that appears to a reasonable person to depict a real person, indistinguishable from an authentic visual depiction of the real person.”81Act of June 20, 2025, ch. 1133, sec. 2, § 21.165(a)(1), 2025 Tex. Sess. Law Serv. at Ch. 1133 (West) (codified as amended at Tex. Penal Code Ann. § 21.165 (2025)).

The revisions also state that it is not a defense if the deepfake is labeled or otherwise indicated to be inauthentic.82Id. sec. 3, § 21.165(c)(c-2)(2).

Similarly, South Carolina’s law covers imagery “that appears to a reasonable person to be indistinguishable from an authentic visual depiction . . . regardless of whether the visual depiction indicates . . . that [it] is not authentic.”83S.C. Code Ann. § 16-15-330(1) (2025).

The Texas and South Carolina statutes are arguably internally inconsistent—they cover depictions “indistinguishable from . . . authentic” records even when observers can easily distinguish them—and they are certainly inconsistent with a propositional-indistinguishability standard. What harmonizes their provisions is reading “indistinguishable” as an aesthetic requirement: If a deepfake looks like an authentic photograph or video recording, it can constitute a violation even if it is in practice entirely distinguishable.

Anti-deepfake laws target outrageous aesthetics, not false propositions. They remedy a harm that stems from an outrageous style of fictional depiction, whether or not a reasonable observer would take the depiction to assert historical facts about the person depicted. Yet the laws employ a vocabulary better suited to regulating the communication of facts. Understanding why the laws do so, and what language better captures their theory of harm, requires excavating the theory of privacy that justifies anti-deepfake statutes.

B. Anti-Deepfake Laws’ Theoretical Scaffolding

Anti-deepfake laws are hard to interpret because, perhaps inadvertently, their drafters legislated right on top of a legal fault line. One can divide the harms redressed by American privacy law and dignitary torts into two discrete categories: harms that derive from disclosures of fact and harms that do not. Defamation, for example, falls into the former category, as it concerns false statements of fact. Contemporary privacy-and-technology discourse, too, tends to focus on factual disclosures. Specifically, it focuses on what I’ll call “information privacy”—the collection, transmission, and use of actual or purported facts about persons.84See, e.g., Danielle Keats Citron & Daniel J. Solove, Privacy Harms, 102 B.U. L. Rev. 793, 810 (2022) (noting that the privacy torts do not address “modern privacy problems involving the collection, use, and disclosure of personal data” and therefore “have little application to contemporary privacy issues”); Paul Ohm, Broken Promises of Privacy: Responding to the Surprising Failure of Anonymization, 57 UCLA L. Rev. 1701, 1705, 1728, 1760 (2010) (characterizing privacy harm as the result of “connecting individuals to harmful facts” about them).

Information-privacy harms result from improper disclosures of personally identifiable facts like someone’s financial information or HIV status.85See, e.g., Shah v. Cap. One Fin. Corp., 768 F. Supp. 3d 1033, 1044–45 (N.D. Cal. 2025) (permitting claim under California Consumer Privacy Act to proceed based on alleged disclosures of “personal and financial information, including employment information, bank account information, citizenship status, and credit card preapproval or eligibility”); N.Y. Pub. Health Law §§ 2780, 2782 (McKinney 2025) (protecting confidentiality of HIV status).

Like defamation, information privacy regulates statements of purported facts about persons.

In parallel, however, runs a current of privacy doctrine unconcerned with factual representations. Among other things, this body of law redresses injuries caused by appropriation—the dignitary harm wrought by having one’s likeness used for the purposes of another.86See infra Section I.B.3.a.

Appropriative harm can occur even when no private facts are revealed and no falsehoods are asserted. It is appropriative harm that anti-deepfake laws address. The violation that the statutes remedy is a highly offensive87“Highly offensive” is a term of art discussed infra Section I.B.3.b.

use of an individual’s likeness, whether or not that use asserts any falsehoods or discloses any true, private facts about the victim.

The problem is that, as Section I.A showed, legislation and scholarship on deepfakes frequently approach an appropriative harm as if it were an information-privacy harm. The mismatch produces an internal tension. In practice, anti-deepfake statutes almost always treat a deepfake’s propositional content as irrelevant. Only a small minority of statutes require a deepfake to be capable of deceiving a reasonable viewer; many provide that disclaimers of inauthenticity are irrelevant. At the same time, however, the statutes describe regulated subject matter in terms of its apparent authenticity. Legislatures, perhaps wary of explicitly regulating offensive aesthetics as such, have tried to couch an aesthetic prohibition in the seemingly safer vocabulary of information privacy. Yet the principle that best explains the statutes’ coverage is neither whether the depiction is indistinguishable from an authentic record nor whether the depiction is false—indeed, actionable deepfakes may defy either criterion88See supra Section I.A.1.

—but rather whether the depiction is “highly offensive.”

Identifying anti-deepfake laws’ theoretical pedigree is important because it informs both how these statutes should be written and how they should be read. Recognizing that harmful deepfakes are highly offensive appropriations of likeness reveals respects in which some statutes are too narrow. Laws that extinguish liability in the presence of a disclaimer misdiagnose an appropriative harm as a defamatory harm.89See Ariz. Rev. Stat. Ann. § 16-1023(A)(2) (2025); La. Stat. Ann. § 14:73.13(C)(1) (2025).

By contrast, laws that ban “depiction[s]” without limitation to highly offensive representational styles are unduly broad restrictions on fictional expression.90See, e.g., Fla. Stat. § 836.13 (2025).

An imprecise identification of the harm to which they respond leaves some anti-deepfake statutes conceptually incoherent. The following subsections unpack the potential justifications for anti-deepfake laws. Because the harms of deepfakes are so frequently mischaracterized as injuries that stem from deceptive assertions of fact, legislators mistakenly attempt to target the problem by regulating “false” imagery or by equating deepfakes with information-privacy violations like revenge porn. But anti-deepfake laws are neither defamation laws nor information-privacy laws because they eschew any requirement that the media in question disclose facts about the person depicted. Statutes that regulate pornographic deepfakes by targeting assertions of fact will never address what’s most objectionable about them, which is neither falsity nor truth, but their highly offensive appropriation of a nonconsenting person’s likeness.

1. Anti-Deepfake Laws Are Not (Just) Defamation Laws

Some legislative history justifies anti-deepfake laws by referring to viewers’ inability to differentiate deepfakes from factual records, which suggests concerns about misleading statements of fact.91See, e.g., Michelle Hinchey, Sponsor Memorandum in Support of N.Y. S.B. S1042A, 2023–2024 Sess., https://nysenate.gov/legislation/bills/2023/S1042/amendment/A [perma.cc/Z6AN-54FG] (“As this technology improves, . . . it becomes nearly impossible to depict what is a real image and what is doctored.”); Hearing on SHB 1999 Before the Senate S. L. & Just. Comm., 2024 Leg. Sess. at 42:26 (Wash. 2024) (remarks of Rep. Tina Orwall), https://tvw.org/video/senate-law-justice-2024021275/?eventID=2024021275 [perma.cc/9MU4-3PS8] (“The bill in front of you is really about the fabricated images . . . . [T]hey’re just as harmful, right? Someone cannot distinguish.”). See generally Marc Jonathan Blitz, Deepfakes and Other Non-Testimonial Falsehoods: When Is Belief Manipulation (Not) First Amendment Speech?, 23 Yale J.L. & Tech. 160 (2020) (characterizing the harms of deepfakes as related to falsity).

Similarly, legislation may refer to deepfakes as “digital forger[ies]” or imply that they entail “fraud”—words that presuppose an intent to deceive viewers about a factual proposition.92See, e.g., 47 U.S.C. § 223(h)(B); see also Fraud, Black’s Law Dictionary (12th ed. 2024) (“[a] knowing misrepresentation or knowing concealment of a material fact made to induce another to act to his or her detriment”); Forgery, Black’s Law Dictionary (12th ed. 2024) (“[t]he act of fraudulently making a false document or altering a real one to be used as if genuine”).

But a common characteristic of many anti-deepfake laws reveals that these statutes seek to remedy a wrong distinct from harmful falsehood. Several laws and federal bills explicitly state that a violator cannot avoid liability by including a disclaimer that states that a deepfake does not record factual conduct on the part of the person depicted.93See supra Section I.A.1.

At least one state that initially required a deepfake be “created with the intent to deceive”94 Tex. Penal Code Ann. § 21.165(a)(1) (West 2025).

later amended the law, removing the intent requirement and specifying that a disclaimer of inauthenticity could not serve as a defense.95Act of June 20, 2025, ch. 1133, sec. 2, § 21.165(c-2), 2025 Tex. Sess. Law Serv. at Ch. 1133 (West) (codified as amended at Tex. Penal Code Ann. § 21.165 (2025)).

Provisions like these are inconsistent with the theory that the harmfulness of deepfakes comes solely from their ability to defame, deceive, and defraud.

The Supreme Court’s defamation jurisprudence teaches that “statements that cannot ‘reasonably be interpreted as stating actual facts’ about an individual” are protected by the First Amendment, at least when they pertain to matters of public concern.96Milkovich v. Lorain J. Co., 497 U.S. 1, 20 (1990) (quoting Hustler Mag., Inc. v. Falwell, 485 U.S. 46, 50 (1988)).

This requirement reflects that a “false representation of fact” is an essential element of defamation.97Pring v. Penthouse Int’l, Ltd., 695 F.2d 438, 440 (10th Cir. 1982).

As the Court has explained, “[u]nder the First Amendment there is no such thing as a false idea.”98Gertz v. Robert Welch, Inc., 418 U.S. 323, 339–40 (1974).

On this understanding, an expressive statement that does not assert or imply a factual proposition cannot be actionably99For a discussion of the distinction between defamation and actionable defamation, see Jeffrey S. Helmreich, True Defamation, 4 J. Free Speech L. 835, 840–46 (2024) (noting that defamation may include truthful speech).

defamatory.100The Restatement’s definition of defamation corroborates this view, although as a constitutional matter it remains an “open issue” whether “statements not provably false about matters of purely private significance” can be actionably defamatory. Restatement (Second) of Torts § 566 (A.L.I. 1977) (opinion “is actionable only if it implies the allegation of undisclosed defamatory facts” (emphasis added)); § 4:2.4 Sack on Defamation, 4-25–26; see also Robert D. Sack, Protection of Opinion Under the First Amendment: Reflections on Alfred Hill, “Defamation and Privacy Under the First Amendment”, 100 Colum. L. Rev. 294, 329–30 (2000).

If anti-deepfake laws addressed only false statements of fact, it would make no sense for them to provide for liability even when the deepfake is accompanied by a disclaimer that effectively communicates that it is unauthorized and ahistorical. Of course, the presence of a disclaimer does not render a statement nondefamatory per se.101New Times, Inc. v. Isaacks, 146 S.W.3d 144, 160–61 (Tex. 2004).

But some disclaimers surely make it impossible for any reasonable viewer to interpret a deepfake “as stating actual facts about an individual.”102Milkovich v. Lorain J. Co., 497 U.S. 1, 20 (1990) (quotation marks omitted) (quoting Hustler Mag., Inc. v. Falwell, 485 U.S. 46, 50 (1988)); see Stanton v. Metro Corp., 438 F.3d 119, 128 (1st Cir. 2006).

To declare disclaimers legally irrelevant is to declare that legally cognizable harm occurs even when no reasonable viewer could understand a deepfake as stating actual facts about the individual depicted. In other words, it is to acknowledge that legally cognizable harm occurs even when a deepfake is nondefamatory as a matter of law.

The Senate made this precise acknowledgement in late July 2024, when it passed a version of the DEFIANCE Act that had been amended with a finding that “individuals depicted in [sexually explicit] digital forgeries are profoundly harmed when the content is produced, disclosed, or obtained without the consent of those individuals. These harms are not mitigated through labels or other information that indicates that the depiction is fake.”103DEFIANCE Act, S. 3696, 118th Cong. § 2(3) (2024) (as amended by S. Amend. 3049 and passed by Senate, July 23, 2024) (emphasis added).

In a decision published two days later, Meta’s Oversight Board made the same observation.104Explicit AI Images of Female Public Figures, Oversight Bd. (July 25, 2024), https://oversightboard.com/decision/bun-7e941o1n [perma.cc/8X7Y-C84G].

Popular attitudes, too, corroborate that the perceived harms of deepfakes are about something more than defamation. One study asked participants to evaluate a hypothetical nonconsensual, pornographic deepfake that was labeled as false and found that “[t]here was no significant effect of labeling on the perceived harmfulness or blameworthiness of the video.”105Matthew B. Kugler & Carly Pace, Deepfake Privacy: Attitudes and Regulation, 116 Nw. U. L. Rev. 611, 636, 640 (2021).

Deepfakes fuel deep outrage that is distinct from their capacity to misinform.106Daniel Immerwahr gets it exactly right when he observes,

In worrying about deepfakes’ potential to supercharge political lies and to unleash the infocalypse, moreover, we appear to be miscategorizing them. . . . Their role better resembles that of cartoons, especially smutty ones. Manipulated media is far from harmless, but its harms have not been epistemic. Rather, they’ve been demagogic, giving voice to what the historian Sam Lebovic calls “the politics of outrageous expression.”

Daniel Immerwahr, What the Doomsayers Get Wrong About Deepfakes, New Yorker (Nov. 13, 2023), https://newyorker.com/magazine/2023/11/20/a-history-of-fake-things-on-the-internet-walter-j-scheirer-book-review [perma.cc/FV9Q-4K3T] (quoting Sam Lebovic, Fake News, Lies, and Other Familiar Problems, 4 J. Free Speech L. 513, 516 (2024)).

2. Anti-Deepfake Laws Are Not Information-Privacy Laws

Just as deepfakes’ harms are often compared to defamatory harms, they are also compared to information-privacy harms. Prohibitions on disseminating “revenge porn”—authentic photographs and video recordings of victims naked or engaged in sexual conduct—are a frequent parallel. In many respects, this comparison is an apt one: Both revenge porn and deepfakes “turn[] individuals into objects of sexual entertainment against their will, causing intense distress, humiliation, and reputational injury.”107Franks & Waldman, supra note 20, at 893.

But revenge porn authentically records private events and thus falls in the heartland of information-privacy regulation, whereas deepfakes do not authentically record private facts—and often do not purport to.

Although some definitions of “revenge porn” refer to “images” without differentiation, jurists consistently employ the term to refer to photographs and video recordings—which record true, private facts about a person’s intimate appearance and activities—rather than to refer to, say, oil paintings and charcoal sketches that depict nudity.108For example, Citron and Franks’s article on revenge porn defines the material as “sexually graphic images of individuals [distributed] without their consent,” which is broad enough to include sketches and paintings in addition to photographs and video recordings. Danielle Keats Citron & Mary Anne Franks, Criminalizing Revenge Porn, 49 Wake Forest L. Rev. 345, 346 (2014). But its exclusive focus is on photographs and video recordings: Every example of nonconsensual pornography that the article discusses involves photographs or video recordings, and it uses phrasing that equates “images” with photographs. See, e.g., id. at 359–60 (“took the image herself” and “took the photo herself” used interchangeably). For a notable counterexample that describes a written account of a sexual encounter as “revenge porn,” see Caitlin Flanagan, The Humiliation of Aziz Ansari, Atlantic (Jan. 14, 2018), https://theatlantic.com/entertainment/archive/2018/01/the-humiliation-of-aziz-ansari/550541 [perma.cc/U64F-HLLT].

Revenge-porn statutes often specify that their coverage is limited to recordings rather than hypothetical depictions of what a victim might have done, or how her body might look.109See, e.g., N.J. Stat. Ann. § 2C:14-9(c) (West 2025) (“photograph, film, videotape, recording or any other reproduction of the image”); Cal. Civ. Code § 1708.85 (West 2025) (“a photograph, film, videotape, recording, or any other reproduction of another”).

Thus, revenge-porn bans fit comfortably within information-privacy regulation because their core function is to restrict the circulation of records of private facts. The harm of revenge porn derives not just from how it looks, but from its ability to document private facts. Resemblance to private facts alone is insufficient. A dead ringer for a victim of revenge porn doesn’t gain a legal claim just by virtue of being a doppelganger; applicable statutes specify that it is “the person depicted” who possesses the cause of action.110See, e.g., 740 Ill. Comp. Stat. 190/10 (2024).

Analogously, jurists have justified revenge-porn bans in part through comparisons to criminal voyeurism.111State v. Katz, 179 N.E.3d 431, 458 (Ind. 2022) (“The invasion of privacy [from voyeurism] is similar to the invasion from nonconsensual pornography—that is, an individual should be able to control and consent to the situations in which their private areas are viewed and captured by another person.”).

Both revenge porn and voyeurism involve not merely a portrayal of a victim’s private affairs, but actual, nonconsensual access to those private affairs. The victim of a voyeur is the person whom the voyeur actually observes, just as the immediate victim of revenge porn is the person actually recorded in the images.

By contrast, anti-deepfake laws regulate resemblance rather than documentation. The statutes regulate deepfakes without regard to the facts they disclose about the victim. Anti-deepfake laws are thus not information-privacy laws, but this does not mean that anti-deepfake laws aren’t privacy laws. Legislators and scholars frequently, and justifiably, characterize deepfakes’ harms as privacy harms.112DEFIANCE Act, S. 3696, 118th Cong. § 2(4) (as amended by S. Amend. 3049 and passed by Senate, July 23, 2024) (“the privacy of . . . victims is violated”); Natalie Lussier, Nonconsensual Deepfakes: Detecting and Regulating This Rising Threat to Privacy, 58 Idaho L. Rev. 353 (2022); Citron, supra note 6, at 1924–25 (discussing the harm of nonconsensual, pornographic deepfakes as a “sexual-privacy invasion”); Congressman Joe Morelle Authors Legislation to Make AI-Generated Deepfakes Illegal, U.S. Congressman Joseph Morelle (May 5, 2023), http://morelle.house.gov/media/press-releases/congressman-joe-morelle-authors-legislation-make-ai-generated-deepfakes [perma.cc/U34Q-HLA5] (announcing “legislation to protect the right to privacy online amid a rise of artificial intelligence (AI) and digitally-manipulated content”); S.B. 309, 31st Leg., Reg. Sess. (Haw. 2021) (discussing “privacy issues . . . including . . . deep fake technology”); cf. Danielle Citron & Mary Anne Franks, Evaluating New York’s “Revenge Porn” Law: A Missed Opportunity to Protect Sexual Privacy, Harv. L. Rev. Blog (Mar. 19, 2019), https://harvardlawreview.org/blog/2019/03/evaluating-new-yorks-revenge-porn-law-a-missed-opportunity-to-protect-sexual-privacy [perma.cc/F9V7-9APL] (discussing revenge porn, not deepfakes).

Danielle Citron describes the harm of deepfakes as a hijacking of identity: They “mak[e] [a subject] be a sexual object in ways that [she] didn’t choose. . . . [I]t takes your sexual identity and exposes it in ways you didn’t choose.”113Brian Feldman, MacArthur Genius Danielle Citron on Deepfakes and the Representative Katie Hill Scandal, Intelligencer (Oct. 31, 2019), https://nymag.com/intelligencer/2019/10/danielle-citron-on-the-danger-of-deepfakes-and-revenge-porn.html [perma.cc/G5JD-8389].

Having this autonomy wrenched away, Citron argues, interferes with liberty, autonomy, and self-development.114Citron, supra note 6, at 1884–86; cf. Citron & Franks, supra note 108 (discussing revenge porn, not deepfakes).

Citron is justified in characterizing the harms of deepfakes as privacy harms, but she is justified by a theory of privacy that the fact-based rubric of information privacy elides and that anti-deepfake statutes conceal by using the vocabulary of facts. What makes deepfakes harmful is that they appropriate a person’s likeness in a highly offensive way.

3. Anti-Deepfake Laws Ban Highly Offensive Appropriations of Likeness